In an ideal world, people would be able to see how the clothing looks on their own image, and then try on various sizes to recreate a true fitting room experience.

These all follow the launch in 2021 of what Google calls its “Shopping Graph”, which uses AI to surface recommendations from the billions of free-to-list products, and replaces its standalone shopping app.

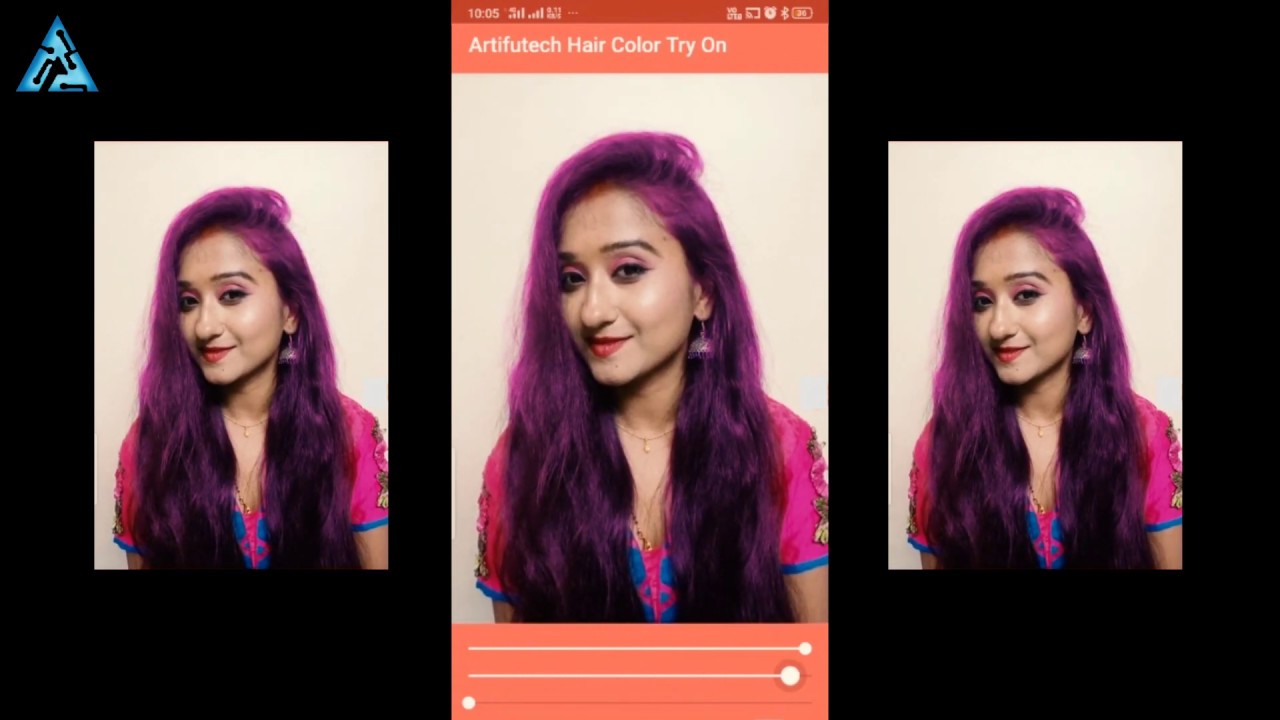

There’s also the consumer side: 42 per cent of online shoppers don’t feel represented by the images of models they see in e-commerce, and 59 per cent feel dissatisfied with an item they shopped for online because it looked different on them than expected, according to a 2023 Google and Ipsos survey of US online shoppers.

Tech startups have been partnering with brands for more than a decade to attempt to solve these challenges. They have often turned to AI, which can generate realistic-looking images based on text- or image-based prompts. As early as 2017, Amazon acquired Body Labs, which makes 3D models for virtual try-on. In 2019, Meta developed tech that uses AI to analyse outfit images and make adjustments to make the outfit more stylish. Zeekit, which also uses photographs of human models to show online fit, was acquired by Walmart in 2021. Last year, Farfetch acquired augmented reality try-on company Wannaby (which just launched a pilot with Maison Valentino ready-to-wear). Bods, which has worked with brands including Khaite, enables shoppers to build and customise avatars in their own image, then see how digital twins of the clothes fit on that avatar.

Rincon says that Google’s approach uses generative AI in a way that hasn’t been done before, and is not another version of digitally “copy and pasting” assets on to model photos using geometric warping or other tech. “This takes an image and an image and uses a diffusion model to put the asset onto our models. It’s not Photoshop.”

Challenges aren’t just technical

AI-generated content regarding humans is still seen as controversial, and complicated technically. Rincon says that Google is especially conscious to note that the models being digitally dressed are real people that Google hired and photographed, and is considering adding their first names to the user interface to further emphasise that point. This stands to be viewed as more “ethical” as the garments are digitally dressed on images of existing people.

A recent project by Levi’s had a similar aim to show items fitted on a more diverse range of people, working with tech startup Lalaland.ai that generates fictional people using AI. Critics responded that it was unethical to depict fictional people in place of live human models; Levi’s responded that it would be physically impossible to photograph every individual stock-keeping unit on someone of each size and shape. Vue.ai, another tech startup, has also provided this type of technology, and recently partnered with Meta to provide it to Meta’s advertisers.

In recent months, Google has also used generative AI to add the ability for advertisers to tweak advertising imagery to make it more compelling, and introduced a Search Generative Experience (SGE) that responds to product searches with additional details such as top product considerations and product summaries with key features, pricing and stockists. Last year, it added the ability for people to visualise lipstick and foundation on a range of models.
